Cluster wild bootstrapping to handle dependent effect sizes in meta-analysis with a small number of studies

Abstract

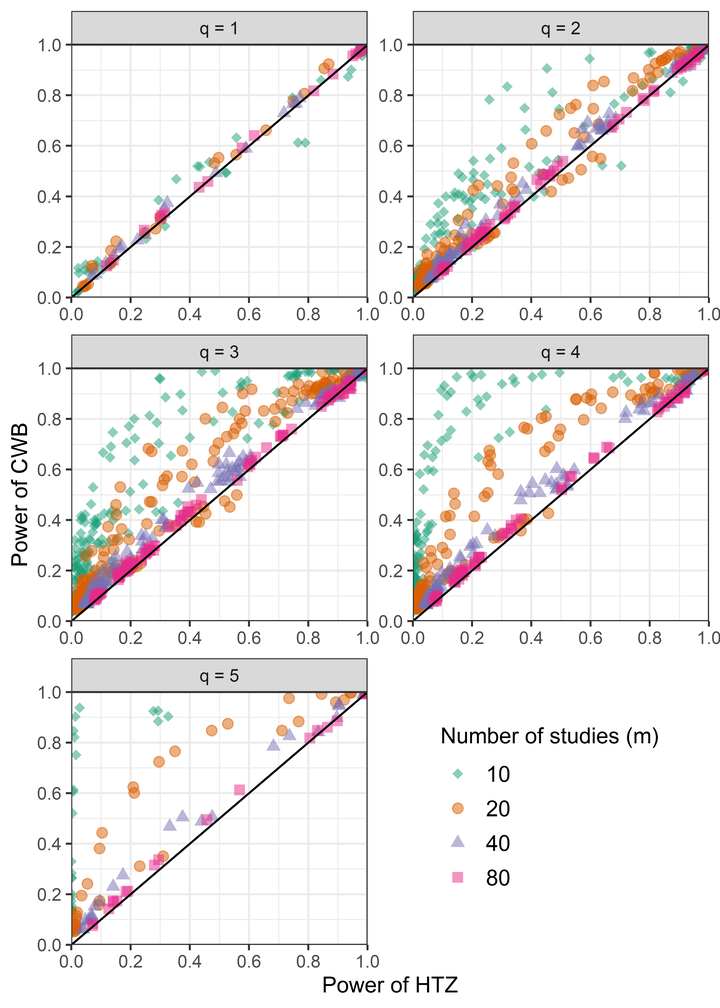

The most common and well-known meta-regression models work under the assumption that there is only one effect size estimate per study and that the estimates are independent. However, meta-analytic reviews of social science research often include multiple effect size estimates per primary study, leading to dependence in the estimates. Some meta-analyses also include multiple studies conducted by the same lab or investigator, creating another potential source of dependence. An increasingly popular method to handle dependence is robust variance estimation (RVE), but this method can result in inflated Type I error rates when the number of studies is small. Small-sample correction methods for RVE have been shown to control Type I error rates adequately but may be overly conservative, especially for tests of multiple-contrast hypotheses. We evaluated an alternative method for handling dependence, cluster wild bootstrapping, which has been examined in the econometrics literature but not in the context of meta-analysis. Results from two simulation studies indicate that cluster wild bootstrapping maintains adequate Type I error rates and provides more power than extant small sample correction methods, particularly for multiple-contrast hypothesis tests. We recommend using cluster wild bootstrapping to conduct hypothesis tests for meta-analyses with a small number of studies. We have also created an R package that implements such tests.